Our Latest News and Insights

Web search APIs in Europe: Which one should you choose?

Most web search APIs powering AI workflows today are US-based. For many organizations in Europe – particularly in the public sector and regulated industries – this creates a practical constraint.

Company

Linkup powers real-time web access for Paradigm, LightOn's secure AI platform

We're excited to announce a strategic partnership with LightOn, a European leader in secure AI for sensitive data.

Company

Linkup: web search for AI agents, no human account needed

Today, Linkup adds support for x402, the open payment protocol developed by Coinbase.

Product

Your Open-Source Model's Knowledge Is Already Stale (how to fix it with Baseten + Linkup)

Open-source models can reason, write, and orchestrate complex tool calls – but their knowledge is frozen at training time. Baseten handles model serving and inference at scale; Linkup provides structured, real-time web access purpose-built for AI systems. Together, they give you the flexibility of open-source with the data freshness production applications require.

Company

Retrieval vs. Reasoning: Where Linkup and GPT fit in your AI stack

Search APIs like Linkup and answer engines like OpenAI operate at distinct layers of the AI stack. Modern systems combine both, but performance depends on identifying whether the bottleneck is retrieval or reasoning – and applying each layer accordingly.

Insights

What’s the Best Alternative to the Bing Search API?

Bing Search API is gone. Linkup is the upgrade.

Product

A Practical Guide to Visibility in AI Search

We keep getting asked how to win AI search – here’s what we’ve learned.

Insights

Linkup raises $10M to build web search for AIs

We’re thrilled to announce that Linkup has raised a $10M seed round led by Gradient to build the Google Search for AIs, and has released /fast, the world's most accurate sub-second web search API.

Company

Google vs. SERP APIs: Why Linkup Users Stay Safe

Why Linkup remains a safe and reliable alternative after Google's lawsuit against SerpApi.

Product

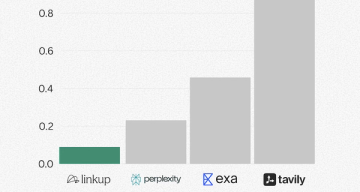

Evaluating AI search systems on complex queries

Source diversity, hallucinations, entity coverage: we benchmarked AI search APIs on Linkup users' queries.

Insights